How to Run a Design Sprint: Process, Cost and What You Get (2026)

Reviewed by Yusuf, Lead Designer at 925Studios

A design sprint compresses months of product decision-making into five days. By Friday afternoon, your team knows whether an idea is worth building, based on real user reactions to a working prototype, before a single line of production code is written. Jake Knapp, who created the sprint method at Google Ventures, puts it this way: "The sprint gives you a superpower: you can fast-forward into the future to see your finished product and customer reactions, before making any expensive commitments." That is the core promise, and it holds up when the process is run correctly.

TL;DR:

A design sprint runs Monday to Friday, covering problem mapping, sketching, deciding, prototyping, and user testing

You leave with a tested prototype and a go or no-go decision, not just a strategy deck

External facilitation costs $5,000 to $15,000; a full agency-run sprint runs $25,000 to $50,000

Internal sprints cost nothing in fees but consume roughly 35 person-days of senior team time

The most common failure is skipping user testing on Friday, which turns a sprint into an expensive workshop

Quick Answer: To run a design sprint, you need five days, a cross-functional team of five to seven people, a clear problem to solve, and five real users scheduled for Friday testing. Day one maps the problem. Day two generates solutions independently. Day three picks the winner. Day four builds a realistic prototype in Figma or Keynote. Day five tests with real users. You end with data, not opinions. Budget $0 in fees for an internal sprint, $5,000 to $15,000 for an external facilitator, or $25,000 to $50,000 for a full agency-run sprint.

Why does running a design sprint matter for product teams?

The typical product team spends three to six months building a feature before finding out whether users actually want it. By then, the cost of being wrong is enormous: sunk engineering time, delayed roadmap, and a feature that gets quietly deprecated. Research from a 2020 primary study (Khalifeh, Rhine-Waal University) found that design sprint participants reported an average of 7x savings in time compared to their normal workflow for reaching the same quality of validated decision. The same study found all participants reported at least 2x return on money, including external facilitation costs, with two participants reporting 4x to 6x savings.

IBM applied design thinking methodology at scale and reported a 75% reduction in design and delivery time, compressing what had been eight-month cycles down to three to four months (IBM Design Thinking, cited in Voltage Control). Savioke, a hotel delivery robot startup, ran a design sprint that identified critical UX issues with how their robot communicated with guests. After one sprint and one implementation cycle, delivery completion rates increased by 60%. Blue Bottle Coffee used a sprint to design and validate their entire online sales channel before building it, avoiding months of misguided development.

The sprint is not a silver bullet. It works for product and experience problems where user feedback can resolve ambiguity quickly. It does not work well for operational problems, technical architecture decisions, or business model questions where the answer requires data over time rather than a single Friday of testing.

Not sure whether a design sprint is the right move for your current product challenge? Talk to our team and we can help you decide.

How do you run a design sprint step by step?

Step 1: Monday, Map the problem

Monday is not a brainstorming session. It is a structured investigation into a specific problem. The team assembles: you need a Decider (CEO, CPO, or whoever has final say), a Facilitator, and three to five team members who bring different perspectives: engineering, design, product, customer success, and marketing. No more than seven people total. The group defines a long-term goal, then maps the user journey to identify where the problem lives. You conduct short interviews with internal experts, typically 15 to 20 minutes each, to surface constraints and prior knowledge that would otherwise emerge slowly over the week.

The day ends with a chosen target: one specific moment in the user journey that the sprint will focus on solving. This focus is what separates sprints from unfocused brainstorm sessions. Common mistake on Monday: treating the day as an open agenda. Sprints that do not set a specific target by end of day one almost always drift. The Facilitator's job is to close that decision before the room leaves.

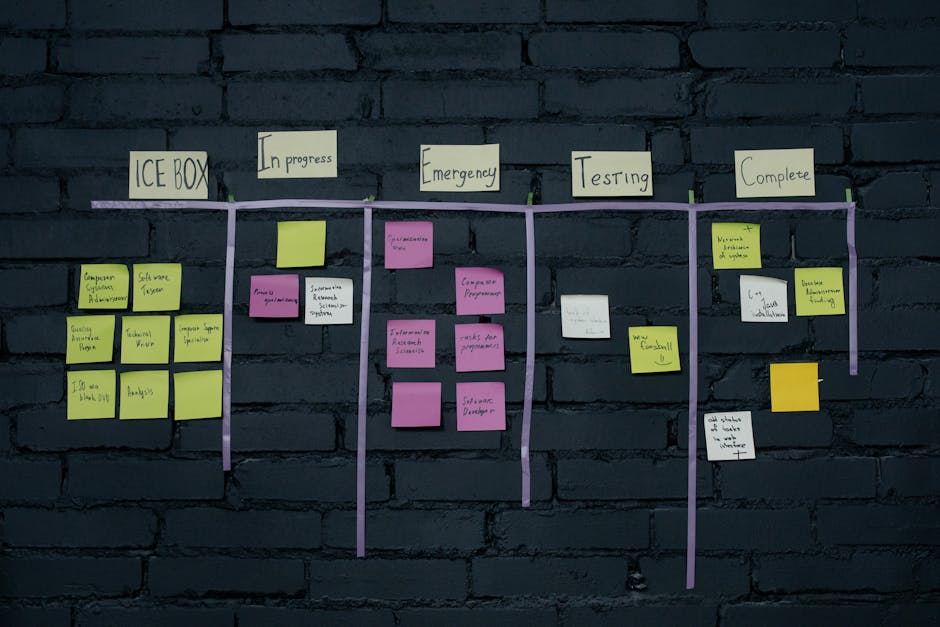

Tools: whiteboard, sticky notes, a printed or digital map of the user journey, a long-term goal statement visible to the full room.

Step 2: Tuesday, Sketch competing solutions

Tuesday is deliberately individual. Each team member works alone to sketch competing solutions to the target problem, following a four-step structured process: notes from Monday, preliminary ideas, Crazy 8s (eight rough sketches in eight minutes), and a final detailed solution sketch. No group presentation happens on Tuesday. The isolation is intentional. Research on groupthink consistently shows that independent generation produces more diverse solutions than group brainstorming, where the first idea anchors the room.

By end of day you have five to seven distinct solution sketches, each detailed enough to evaluate but not polished. The sketches do not need to be beautiful. They need to show the interaction logic. Common mistake: spending Tuesday refining one idea instead of exploring multiple. The point of sketching is divergence, not polish.

At 925Studios, when we facilitate sprints for SaaS clients, we often find that the sketch chosen on Wednesday and validated on Friday comes from an engineer or a customer success rep, not the designer. Independent sketching surfaces perspectives that would never emerge in a room where the designer talks first.

Step 3: Wednesday, Decide and storyboard

Wednesday uses a structured critique process, not a vote by committee. The team runs four steps in order: a "heat map" review (anonymous sticky-dot voting on the elements people find interesting), a fast critique of each sketch in which the team notes strengths and concerns, a "straw poll" to signal preference, and a final decision by the Decider. The Decider has the last word, even if the vote went another way. This prevents death by consensus while still using collective intelligence to inform the decision.
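The decision flow above can be sketched in a few lines of Python. This is only an illustration of the logic, not a tool the GV method prescribes: the function name `decide`, its parameters, and the sketch names in the usage example are all hypothetical.

```python
from collections import Counter


def decide(heat_map_dots, straw_poll, decider_pick=None):
    """Return (winning_sketch, summary) for a Wednesday decision.

    heat_map_dots: list of sketch names, one entry per sticky dot placed.
    straw_poll: dict mapping voter name -> preferred sketch.
    decider_pick: the Decider's final choice, or None to follow the poll.
    """
    if not straw_poll:
        raise ValueError("straw poll needs at least one vote")
    dots = Counter(heat_map_dots)
    poll = Counter(straw_poll.values())
    # most_common(1) returns the top entry; ties go to the first one counted.
    poll_leader = poll.most_common(1)[0][0]
    # The Decider has the last word, even against the straw poll.
    winner = decider_pick if decider_pick is not None else poll_leader
    summary = {
        "dots": dict(dots),
        "poll": dict(poll),
        "poll_leader": poll_leader,
        "decider_overrode_poll": winner != poll_leader,
    }
    return winner, summary


# Hypothetical example: the room prefers the wizard, the Decider picks inline tips.
dots = ["onboarding-wizard", "inline-tips", "onboarding-wizard", "checklist"]
poll = {"Ana": "onboarding-wizard", "Ben": "inline-tips", "Cy": "onboarding-wizard"}
winner, summary = decide(dots, poll, decider_pick="inline-tips")
print(winner, summary["decider_overrode_poll"])
```

Recording whether the Decider overrode the poll is a design choice for the debrief: it keeps the rationale visible without giving the vote any binding power.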

Wednesday afternoon is spent building a storyboard: a 10-15 panel comic strip showing the user's journey through the solution from first encounter to the key moment. This storyboard becomes the blueprint for Thursday's prototype. Also on Wednesday: recruit the five users for Friday testing. Finding five real users who match your target persona in 48 hours requires a pre-built recruitment list or a service like UserTesting.com. This step catches most teams off guard if they have not planned for it.

Common mistake: spending Wednesday in debate rather than decision. The Facilitator must enforce the time structure. A sprint that is still deciding on Thursday morning is already failing.

Tools: sticky dots for heat map voting, a shared storyboard canvas, a user recruitment message ready to send by end of day.

Step 4: Thursday, Build the prototype

Thursday is prototype day, and the key principle is to build only what users will touch on Friday. Not a full product. Not a real system. A realistic-looking facade that simulates the critical interactions. The GV method has an explicit name for this: "fake it." Figma is the standard tool for most digital products: linked frames that simulate clicks and transitions without any real data. For physical or service products, teams have used Keynote, printed mockups, or even role-playing scenarios.

The prototype needs to pass what the GV team calls the "Goldilocks" test: realistic enough that users react as they would to a real product, but rough enough that it took one day to build, not one month. A prototype that is too polished gets feedback on aesthetics, not on whether the idea works. A prototype that is too rough confuses users before they can react to the concept.

Thursday also includes writing a simple interview script for Friday: five to seven open-ended questions, a task to complete in the prototype, and probing questions at the end. Common mistake: building too much. Teams that try to prototype the full product run out of time and arrive Friday with a broken or incomplete experience. Scope ruthlessly.

Want to see what a sprint prototype typically looks like before investing in one? Browse our case studies to see the kinds of prototypes our clients validate.

Step 5: Friday, Test with real users

Friday is why the sprint is worth running. Five users. Five 1-on-1 interviews, each 60 minutes. One person facilitates the interview (asking questions but not leading). The rest of the team watches a live stream or video feed from another room, noting observations on sticky notes. After five interviews, the team debriefs: what patterns emerged, where users got confused, what they responded to positively, and whether the core idea has been validated.

Five users is not a large sample, but Jakob Nielsen's research on usability testing found that five users uncover roughly 85% of the usability problems in an interface. For a sprint, the goal is directional signal, not statistical certainty. By interview three or four, the team watching from the back room is already seeing consistent patterns. By interview five, there is almost always enough signal to make a decision.
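The 85% figure comes from a simple model that Nielsen and Landauer proposed: the share of problems found by n users is 1 - (1 - L)^n, where L is the share a single user uncovers. The sketch below assumes L = 0.31, the average Nielsen reports across the projects he studied; treat that value as an assumption, since your own product may behave differently. The function name is hypothetical.

```python
def share_of_problems_found(n_users, per_user_rate=0.31):
    """Estimated share of usability problems found by n_users testers.

    per_user_rate is the assumed share one tester uncovers (0.31 is the
    average reported by Nielsen, not a constant of nature).
    """
    return 1 - (1 - per_user_rate) ** n_users


# Print the curve for one to eight testers to see the diminishing returns.
for n in range(1, 9):
    print(n, f"{share_of_problems_found(n):.0%}")
```

With the default rate, five testers come out at about 0.84, which is the number usually rounded to 85%. Each additional tester after that adds less, which is why the sprint stops at five.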

After Friday, you have one of three outcomes: clear validation (build it), clear failure (learn and move on), or partial signal (specific elements worked, others did not, worth another sprint or a targeted redesign). The debrief documents the decision rationale so it does not evaporate over the weekend.

Common mistake: skipping Friday entirely. Teams that run Monday through Thursday and then present the prototype internally at a meeting have run an expensive workshop, not a design sprint. The user testing is the whole point.

How much does a design sprint cost?

The honest cost breakdown that most articles avoid:

| Sprint Type | Direct Cost | Hidden Cost | Best For |
|---|---|---|---|
| Internal team only | $0 in fees | ~$28,000-$42,000 in opportunity cost (7 people x 5 days at $800-$1,200/day blended rate) | Teams with a trained facilitator on staff |
| External facilitator | $5,000-$15,000 facilitation fee | Internal team time still consumed | First sprint, high-stakes decisions |
| Full agency-run sprint | $25,000-$50,000 | Minimal internal time required | When the team cannot block a full week |
| Remote / async sprint | $8,000-$20,000 | Coordination overhead, longer timeline (6-10 days) | Distributed teams, tight schedules |

Sourced from Design Sprint Ltd pricing data and Voltage Control agency rate research (2025-2026).

The internal-only sprint looks free, but it is not. Blocking seven senior people for five days removes them from revenue-generating or product-shipping work. If your CEO, CPO, and five colleagues from engineering, design, and product are in a sprint room, you are spending roughly $28,000 to $42,000 in blended opportunity cost (35 person-days at $800 to $1,200 per day), regardless of whether you pay anyone for facilitation. That cost is only worth it if the sprint saves you from building something that would cost far more to build and then abandon.
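To adapt the opportunity-cost arithmetic to your own team, a minimal sketch follows. The defaults reuse the table's figures (7 people, 5 days, $800 to $1,200 per day); the function and parameter names are hypothetical, and the blended day rate is something you would need to estimate for your own company.

```python
def sprint_cost_range(people=7, days=5, low_rate=800, high_rate=1200,
                      fee_low=0, fee_high=0):
    """Return (low, high) total sprint cost in dollars.

    Opportunity cost is person-days times a blended day rate; any
    facilitation or agency fee is added on top.
    """
    person_days = people * days
    low = person_days * low_rate + fee_low
    high = person_days * high_rate + fee_high
    return low, high


# Internal-only sprint with the table's defaults.
print(sprint_cost_range())
# Same team plus an external facilitator at $5,000 to $15,000.
print(sprint_cost_range(fee_low=5000, fee_high=15000))
```

The first call returns (28000, 42000), matching the table's hidden-cost range. Adding the facilitator fee gives (33000, 57000), which is the number to compare against the cost of building and then abandoning the wrong feature.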

When we run design sprints for clients at 925Studios, the full-service sprint includes pre-sprint problem framing, facilitation across all five days, a Figma prototype, user recruitment, and a Friday debrief document. Most clients find that the structure we bring reduces the risk of the most expensive sprint failure mode: a week spent on the wrong problem.

What are the most common design sprint mistakes?

Mistake 1: The wrong Decider in the room

The Decider role only works if it is filled by someone with actual authority. Teams that bring a middle manager as the Decider often find that Wednesday's decisions get undone the following week when the CEO sees the prototype. The sprint requires the real decision-maker in the room for the full week. This is why many sprints start with executive buy-in work before the sprint itself, getting the CEO or CPO to commit five days before you block the team's calendars.

Mistake 2: Solving the wrong problem

Monday's problem mapping is where sprints succeed or fail before they start. Teams that skip the expert interviews or rush the target-setting exercise often spend five days solving a symptom rather than a root cause. Slack's sprint focused on marketing site messaging because research showed new users were not understanding the product value before signing up. A team that simply assumed the UX was the problem would have spent the week on the wrong thing. Rigorous Monday work is not optional.

Mistake 3: Building too much on Thursday

Prototype scope creep is the most common execution failure. Teams that try to prototype edge cases, error states, and secondary flows run out of time and arrive Friday with a half-finished experience that cannot be tested. The prototype should cover exactly the critical path that appears in your storyboard, nothing more. Figma makes it easy to go further than you need to, so have the Facilitator enforce the scope boundary.

Mistake 4: Running sprints on the wrong type of problem

Design sprints are designed for experience and product problems where user feedback creates clarity. They are not effective for pricing strategy, technical architecture, hiring decisions, or market-entry choices. Teams that run a sprint on "how should we expand into enterprise?" will spend five days producing a prototype that cannot be tested with enterprise users in a single Friday session. Match the method to the problem type.

What do you actually walk away with after a design sprint?

At the end of Friday, your team has: a tested prototype (in Figma or equivalent), five user interview recordings or notes, a documented decision (go, no-go, or iterate), and a debrief document capturing what worked and what did not. The prototype is not production-ready, but it is often detailed enough to hand directly to engineering as a reference alongside the insights from user testing.

What you do not walk away with: a finished product, a final spec, or a guarantee of success. The sprint output is a validated hypothesis, not a launch. Teams that treat the sprint prototype as a final deliverable skip the implementation and iteration work that makes it real. The sprint is the beginning of confident building, not the end of product development.

For teams running their first sprint, we recommend treating Friday's findings as input to a short iteration sprint the following week: fix the issues users surfaced, prototype the revised version, and test with two to three more users before handing to engineering. That second loop often improves the fidelity of the output significantly. Yusuf walks through how to structure this post-sprint iteration on the 925Studios YouTube channel with real examples from client work.

Frequently Asked Questions

How long does a design sprint take?

The original GV format runs Monday through Friday, five consecutive days. Some agencies offer a compressed four-day version by combining Monday and Tuesday into a single long day. Remote sprints often run six to ten days to accommodate time zones and async work between sessions. The five-day version is still the most effective for in-person teams because it maintains momentum and keeps context fresh across all decisions.

How many people do you need for a design sprint?

Five to seven people is the ideal range. You need a Decider (CEO, CPO, or senior stakeholder), a Facilitator, and three to five team members across design, engineering, product, and customer-facing roles. More than seven people creates coordination overhead that slows decisions. Fewer than five leaves the room without enough perspective diversity to catch blind spots during Monday's problem mapping.
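These composition rules are easy to check before you send calendar invites. The sketch below is one hypothetical way to encode them; the role labels and the function name `check_team` are assumptions for illustration, not part of the GV method.

```python
def check_team(roles):
    """Return a list of problems with a proposed sprint team.

    roles: one lowercase role label per participant,
    e.g. ["decider", "facilitator", "design", "engineering", "product"].
    An empty list means the team fits the rules described above.
    """
    problems = []
    if not 5 <= len(roles) <= 7:
        problems.append(f"team size is {len(roles)}, expected 5 to 7")
    if roles.count("decider") != 1:
        problems.append("team needs exactly one Decider")
    if "facilitator" not in roles:
        problems.append("team needs a Facilitator")
    return problems


# Hypothetical five-person team that satisfies every rule.
print(check_team(["decider", "facilitator", "design", "engineering", "product"]))
# A team that is too small and has no Decider.
print(check_team(["facilitator", "design"]))
```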

Do you need an external facilitator to run a design sprint?

No, but it helps significantly for first-time teams and high-stakes decisions. An external facilitator keeps the process neutral, enforces time constraints, and prevents the Decider from dominating the room. Internal facilitators work well once a team has run two or three sprints and understands the flow. For your first sprint on a major product decision, the cost of external facilitation ($5,000 to $15,000) is modest relative to the decisions being made.

What is the difference between a design sprint and a hackathon?

A hackathon produces a working build. A design sprint produces a tested prototype. Hackathons reward speed of implementation and often generate functional but untested features. Design sprints prioritize validated learning over working code. The sprint deliberately avoids building anything real until Friday's user research confirms you are solving the right problem in the right way. Hackathons are output-oriented; sprints are decision-oriented.

When should you not run a design sprint?

Do not run a sprint when the problem cannot be tested with five users in a single Friday session, when the Decider cannot commit five consecutive days, when the team has no access to real users for Friday testing, or when the problem is operational or strategic rather than experience-based. Sprints also have diminishing returns when run more than once per quarter on the same team, a pattern sometimes called sprint fatigue.
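The conditions above work as a pre-sprint checklist. The sketch below encodes them as a small data class; every field and function name is hypothetical, and the once-per-quarter limit is taken directly from the paragraph above rather than from any formal rule.

```python
from dataclasses import dataclass


@dataclass
class SprintCandidate:
    testable_in_one_friday: bool   # five users, one session
    decider_free_all_week: bool    # five consecutive days
    real_users_available: bool     # for Friday testing
    experience_problem: bool       # not operational or strategic
    sprints_this_quarter: int = 0  # sprints this team has already run


def reasons_not_to_sprint(c):
    """Return the list of reasons this problem is a poor fit for a sprint."""
    reasons = []
    if not c.testable_in_one_friday:
        reasons.append("cannot be tested with five users in one Friday session")
    if not c.decider_free_all_week:
        reasons.append("Decider cannot commit five consecutive days")
    if not c.real_users_available:
        reasons.append("no access to real users for Friday testing")
    if not c.experience_problem:
        reasons.append("problem is operational or strategic, not experience-based")
    if c.sprints_this_quarter >= 1:
        reasons.append("team already ran a sprint this quarter (diminishing returns)")
    return reasons


# Hypothetical candidate: good problem, but the Decider is unavailable.
print(reasons_not_to_sprint(SprintCandidate(True, False, True, True)))
```

An empty list means none of the listed blockers apply; it does not guarantee the sprint will succeed.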

How do you recruit users for Friday testing on short notice?

Recruitment starts on Wednesday, giving you 48 hours. Use your existing customer base first: a simple email asking for 60 minutes of feedback time with a calendar link converts well if your product has engaged users. For new user segments, UserTesting.com, Respondent.io, or a LinkedIn message to your target persona can fill slots in 24 to 36 hours. You need exactly five people who match your target customer profile. Avoid recruiting people who know your company well: they will fill gaps with assumptions instead of surfacing real confusion.

What happens after the design sprint?

After Friday's debrief, the three most common paths are: validated idea moves to engineering with the prototype as a reference, failed idea gets shelved with documented reasoning (saving months of wrong-direction development), or partial validation triggers a focused second sprint on the specific element that did not work. The sprint output feeds directly into your product backlog as a prioritized, user-tested item rather than a speculative feature request.

Can you run a design sprint remotely?

Yes, and remote sprints have become standard since 2020. Tools like Miro or FigJam handle the whiteboarding and voting exercises. Video-based user testing via Zoom or Lookback replaces the in-person lab. Remote sprints typically need at least one additional day of buffer to account for async communication gaps between sessions. The core process is the same: map, sketch, decide, prototype, test. The facilitation demands are higher because the Facilitator cannot read the room physically.

Templates and resources to run your sprint

To run a design sprint, you need six things: a sprint checklist document for each day, a storyboard template (15 panel grid), a heat map voting sheet for Wednesday, a Figma starter file for Thursday's prototype, a Friday interview guide, and a debrief document template. The GV team publishes their official sprint resources at gv.com/sprint, including a free PDF summary of the full process. AJ&Smart, one of the most cited sprint facilitation agencies, publishes updated templates and facilitation guides at ajsmart.com.

For the Friday user testing, create a simple script: two to three warm-up questions about the user's context, one core task to complete in the prototype, and three to four probing questions about their experience. Keep the facilitator's language neutral. "What do you think this does?" tells you more than "Did you understand this feature?"

If you want to run a design sprint to validate your next product decision and want a structured approach without consuming five days of your entire leadership team, reach out to 925Studios. We facilitate sprints for SaaS, fintech, and AI product teams and can structure the process around your specific challenge.
